Design your news stream

In a discussion group, a question about websites people frequent daily led me to explore the nature of news consumption. The pace of content production is ever-increasing and new media sources appear daily. It is important to investigate, understand and streamline our consumption patterns.

Are you a fundamental analyst looking for an edge in a dense space? Are you a devoted Hacker News reader who would like to explore other interests beyond startups and technology? Or perhaps you are still subscribed to dead trees and worried that your favorite paper is preparing to go digital? Read on.

I propose looking at news creation and distribution through five nearly orthogonal stages: observation, reporting, sharing, curation and consumption.

Observation

Observation or recording of an event or conception of an idea leads to new information that we are willing to distribute.

Reporting

Through reporting, information is turned into a story or explanation. This could be through professional journalism, blogging or even public recitation.

Sharing

News is shared via word of mouth, social networks and traditional media.

Curation

Curation is the selection of the most relevant content for consumption. We have seen social curation (Hacker News), hand-curation (traditional media providers) and machine-curation (Prismatic).

Consumption

When news is digital, the mode of consumption can be varied at the leisure of the consumer. From reading off a tablet while lounging on the couch to elevated real-time television dashboards and voice-over, we expect the same content to be responsively accessible in many ways.

Consumption is more a feature of our environment than a conscious choice, so the most interesting stage for us as content consumers to explore is curation.

Social curation

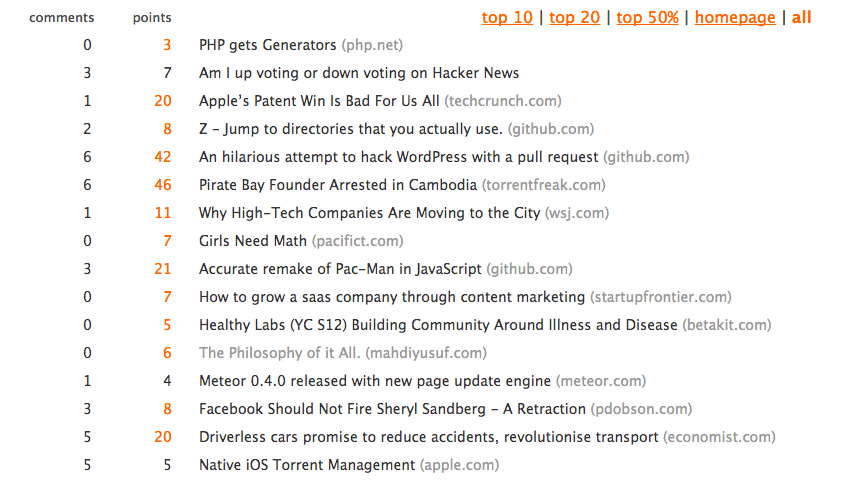

Social curation websites like reddit or Hacker News allow users to post news items, which are then rated by other users through upvotes and comments. Top-rated items show up on the homepage.

hckrnews is an unofficial Hacker News client.

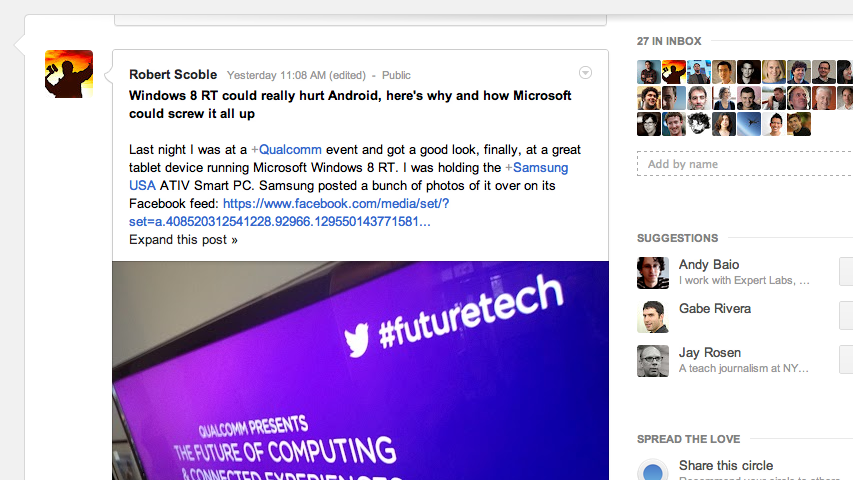

Social networks like Google+ are examples too. You hand-curate and follow a set of people and you get all the news they share along with everything else.

Machine curation

Machine curation is an even more recent paradigm where news is selected by machine learning algorithms. In addition to numeric social metrics such as shares and likes, these algorithms can look at influence scores, topic relevance and source credibility, among other things. Your Facebook stream is an example of this.

Prismatic lets you select both topics and individual content providers (blogs or news publishers). It then uses social signals from people in your networks to curate, yielding a highly personalized real-time stream.

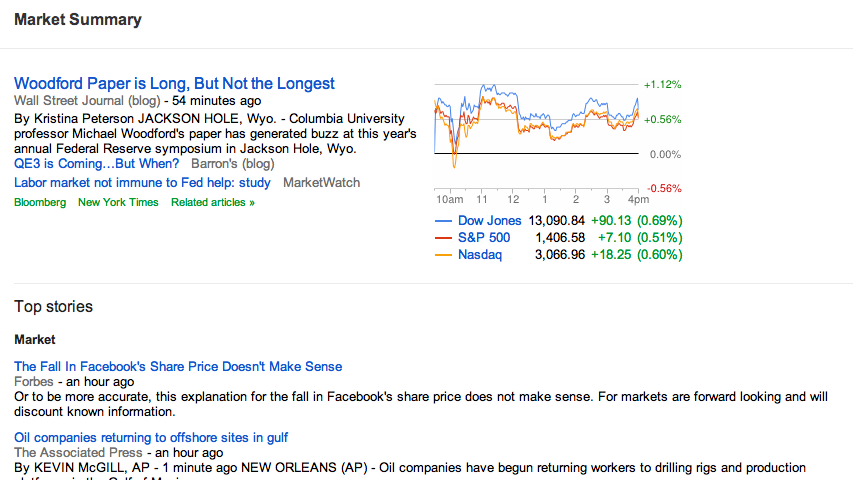

Google Finance aggregates news relevant to financial markets. Sources are presumably selected by experts.

Manual curation

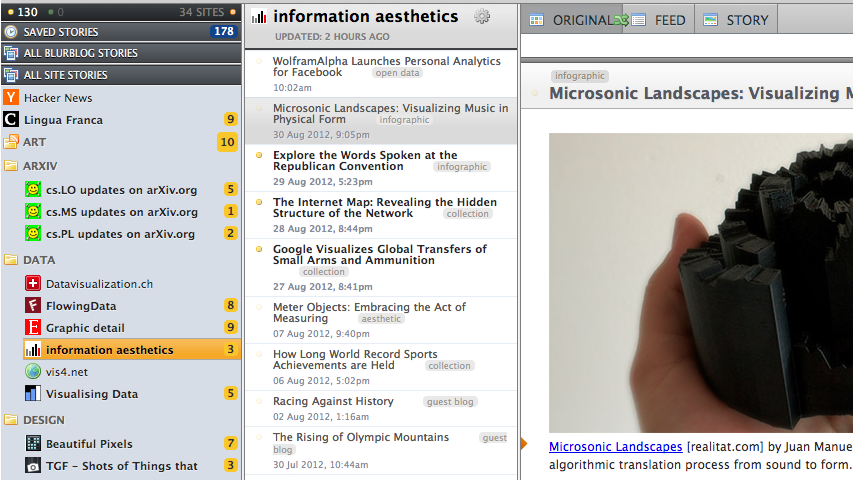

Many people still source their news from a set of RSS feeds. Tools like NewsBlur permit filtering by tags and other metadata, allowing you to fine-tune which types of content from a given provider you are interested in.
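To make tag-based filtering concrete, here is a minimal sketch in Python. It is not how NewsBlur or any particular reader works internally; the `Entry` type, the `filter_entries` helper and the sample entries are all made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Entry:
    # Hypothetical, simplified view of one feed entry.
    title: str
    source: str
    tags: set[str] = field(default_factory=set)


def filter_entries(entries, wanted_tags, blocked_tags=frozenset()):
    """Keep entries that carry at least one wanted tag and no blocked tag."""
    wanted = set(wanted_tags)
    blocked = set(blocked_tags)
    return [e for e in entries if e.tags & wanted and not (e.tags & blocked)]


# Made-up entries from a single provider.
entries = [
    Entry("Visualizing census data", "data-blog", {"data", "visualization"}),
    Entry("Weekly link roundup", "data-blog", {"links"}),
    Entry("Sponsored: new dashboard tool", "data-blog", {"data", "sponsored"}),
]

kept = filter_entries(entries, wanted_tags={"data"}, blocked_tags={"sponsored"})
print([e.title for e in kept])
```

The point is that the provider stays fixed while the filter narrows what you actually see from it.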

Hybrid tools

Using web technologies, it is very easy to combine streams of content, and apps like Pulse have risen to the occasion.
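As a rough illustration of what combining streams can mean, the sketch below merges two already-sorted streams into one and drops duplicate links. It says nothing about how Pulse is built; the item layout (`url`, `published`) and the example URLs are assumptions made for the example.

```python
import heapq
from datetime import datetime


def merge_streams(*streams):
    """Merge newest-first streams into one newest-first list without duplicate URLs."""
    # heapq.merge expects every input to be sorted already (here: newest first).
    merged = heapq.merge(*streams, key=lambda item: item["published"], reverse=True)
    seen = set()
    result = []
    for item in merged:
        if item["url"] not in seen:
            seen.add(item["url"])
            result.append(item)
    return result


# Two made-up streams, each sorted newest first.
rss = [
    {"url": "https://example.com/b", "published": datetime(2013, 1, 3)},
    {"url": "https://example.com/a", "published": datetime(2013, 1, 1)},
]
social = [
    {"url": "https://example.com/c", "published": datetime(2013, 1, 2)},
    {"url": "https://example.com/a", "published": datetime(2013, 1, 1)},
]

combined = merge_streams(rss, social)
print([item["url"] for item in combined])
```

De-duplicating by URL matters here because the same story often arrives through more than one channel.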

Summary

Here are the important properties of each curation method:

Hand: we curate a fixed set of sources, so we don’t miss any information

Social: we curate people with similar interests instead of news sources, with swarm intelligence acting as a content-quality filter

Machine: we curate based on topics of interest, and the algorithm can take all other means of curation as input

Relevance and Quality

Two metrics surface that can help compare curation methods: relevance and quality. In hand-curation and social curation, relevance and quality are coupled in the selection process. Machine curation, however, starts with topical content it thinks is relevant and tries to extract the highest-quality content out of that batch. This natural decoupling is an important feature of digital news distribution.
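The decoupling can be pictured as a two-stage pipeline: filter by relevance first, then rank by quality. The sketch below is only an illustration of that idea, not any real service's algorithm; the `topics` and `quality` fields and the scores are invented.

```python
def curate(items, interests, top_n=2):
    """Two-stage curation: relevance filter first, quality ranking second."""
    # Stage 1 (relevance): keep items sharing at least one topic with the reader.
    relevant = [item for item in items if item["topics"] & interests]
    # Stage 2 (quality): rank only the relevant items by their quality score.
    ranked = sorted(relevant, key=lambda item: item["quality"], reverse=True)
    return ranked[:top_n]


# Made-up items; in practice the quality score could come from social signals.
items = [
    {"title": "Intro to regression", "topics": {"statistics"}, "quality": 0.6},
    {"title": "Celebrity gossip", "topics": {"entertainment"}, "quality": 0.9},
    {"title": "Mapping open data", "topics": {"data", "maps"}, "quality": 0.8},
    {"title": "Chart junk rant", "topics": {"data"}, "quality": 0.3},
]

picks = curate(items, interests={"data", "statistics"})
print([item["title"] for item in picks])
```

Note that the highest-quality item overall never makes the cut, because it fails the relevance stage before quality is even considered.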

Streamlining your content streams

To understand how content moves between news channels, consider these diagrams.

RSS doesn’t need much of an explanation. Sources in, content out.

In Twitter we can distinguish between pure content sources and distributors, which tend to reshare or retweet content found somewhere else.

Prismatic uses both definite content sources and social networks for curation.

Designing your news stream

Finally, let’s look at how we can leverage the properties of each of these channels to get a relevant and flexible news stream.

Given RSS’ “lossless” property, we reserve it for blogs and media where we expect or need to read every single thing. For me, that’s a set of data blogs plus a few others that post very rarely and are niche enough to never appear in curated streams. I do a pass through NewsBlur every two weeks or so. Google Reader would be a popular alternative.

I also read the Hacker News front page. It’s not a lot to read and is usually highly targeted. If your interests are outside tech, you might find an interesting subreddit or a mailing list.

Twitter is great for bringing a lot of content sources together. Many subreddits and RSS feeds have Twitter profiles. Facebook would be useful if you have similar interests to your friends. I find that even when that is the case, my friends tend to post mostly entertainment or casual reading, so I prefer Twitter. Google+ is another option, but currently it may not integrate very well with aggregators. Consider it more of an endpoint.

Finally, I use Prismatic to fuzzily feed in a couple more content sources and all my topics of interest. It works surprisingly well. If you follow people who post relevant content, it will surface here, so you won’t miss out on important news even if you do not read your whole Twitter stream (from what I understand, hardly anybody does these days; I certainly can’t). I really like the elasticity: however much time you have available, you know you will get the top news. Wavii is worth looking at if you need an alternative.
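One way to think about that elasticity is as a time budget applied to a ranked stream. The following sketch is my own simplification, not a description of how Prismatic works; the `score` and `minutes` fields and their values are hypothetical.

```python
def pick_for_budget(items, minutes_available):
    """Greedily take the highest-scored items that still fit in the time budget."""
    chosen = []
    remaining = minutes_available
    for item in sorted(items, key=lambda i: i["score"], reverse=True):
        if item["minutes"] <= remaining:
            chosen.append(item)
            remaining -= item["minutes"]
    return chosen


# Made-up stream: a relevance score and an estimated reading time per item.
stream = [
    {"title": "A", "score": 0.9, "minutes": 8},
    {"title": "B", "score": 0.7, "minutes": 5},
    {"title": "C", "score": 0.5, "minutes": 3},
    {"title": "D", "score": 0.4, "minutes": 2},
]

short_session = pick_for_budget(stream, 10)
long_session = pick_for_budget(stream, 20)
print([i["title"] for i in short_session])
print([i["title"] for i in long_session])
```

With a short session you still get the top story plus whatever small item fits; with a longer one the same stream simply yields more.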

For the record, I mainly read either on my tablet or Mac and occasionally delegate to Readability.

I hope you’re inspired to at least give some of these services a good look and see if they would work for you. You know the concepts now and it’s just a question of fitting them to your interests and constraints.